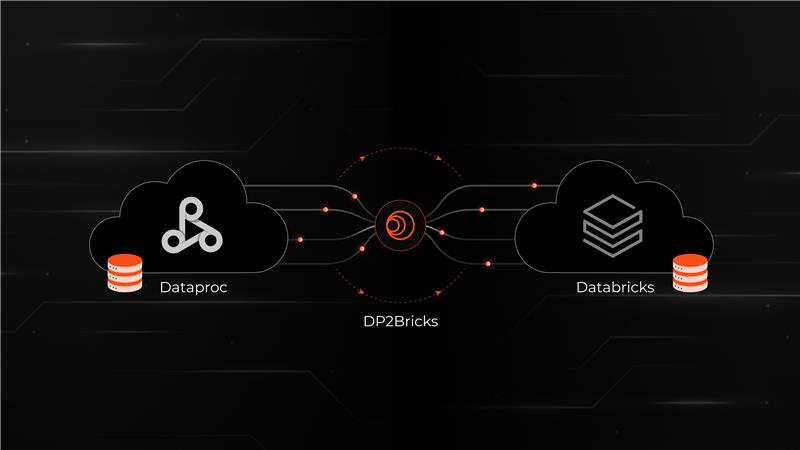

Composer2Bricks

Modernize Apache Airflow Workloads to Databricks

Google Cloud Composer to Databricks

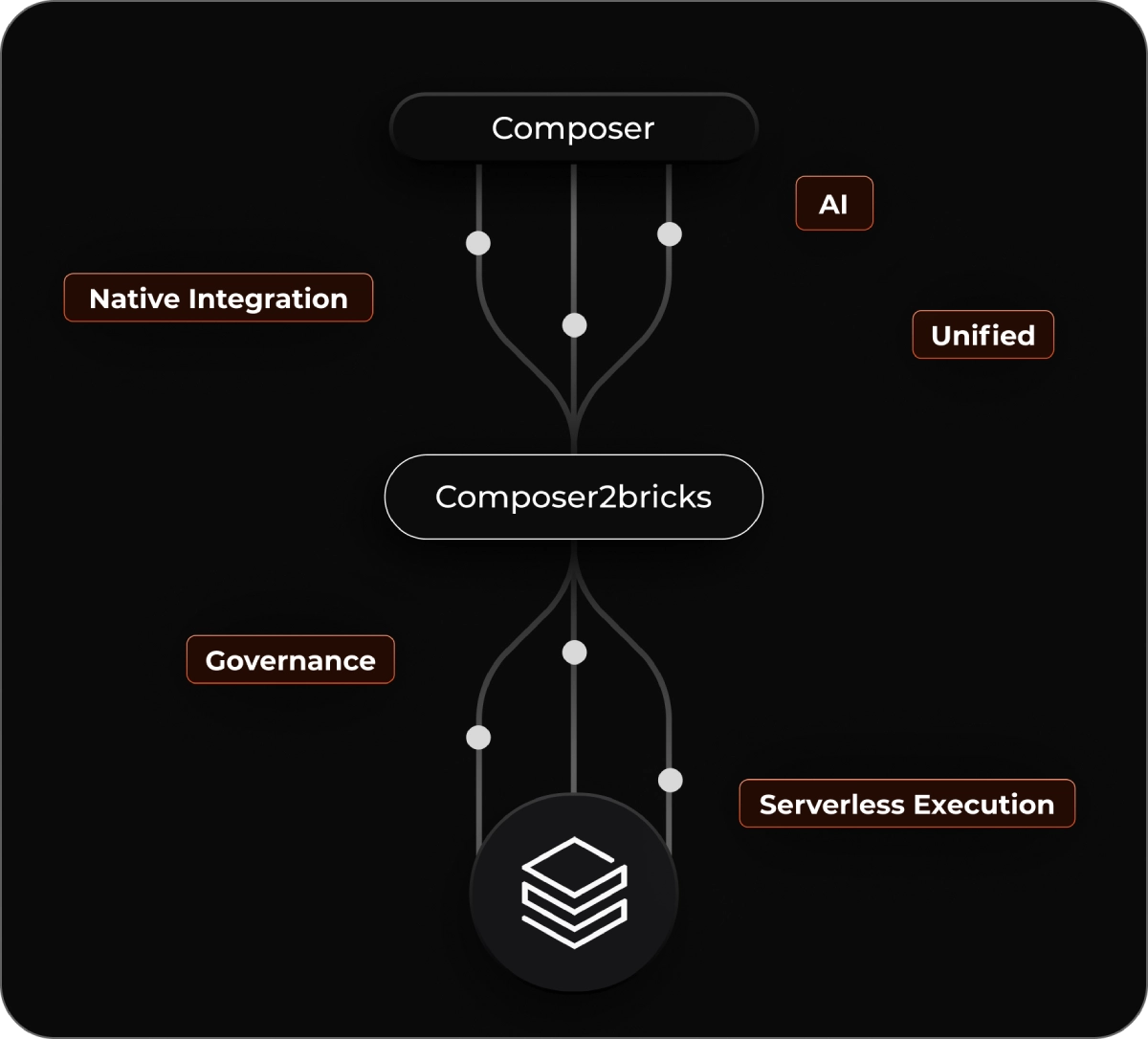

An accelerator that automates DAG conversion, dependency analysis, and environment deconstruction, enabling enterprises to unify, govern, and scale orchestration with a Databricks-native approach.

Why migrate from Cloud Composer to Databricks?

Cloud Composer works well for isolated orchestration, but as DAG volumes grow, workflow drift, manual dependency management, and environment upkeep slow delivery. Scaling becomes costly and unpredictable, with limited visibility and fragmented governance across teams.

Databricks Workflows offers unified orchestration, serverless execution, and native integration with data, AI, and governance.

Migrating to Databricks calls for a structured, automated pathway, which is what Composer2Bricks provides.

Customer Challenges Addressed by Composer2Bricks

Fragmented environments cause DAG drift and duplicated orchestration logic.

Manual dependency, plugin, and environment management increases overhead.

Airflow version drift creates instability and slows releases.

Scaling workflows is expensive and unreliable as pipeline volumes grow.

Visibility, lineage, and governance across orchestration assets are limited.

There is no clear way to assess migration effort, risk, or timeline.

Core Capabilities

Standardized DAG Conversion

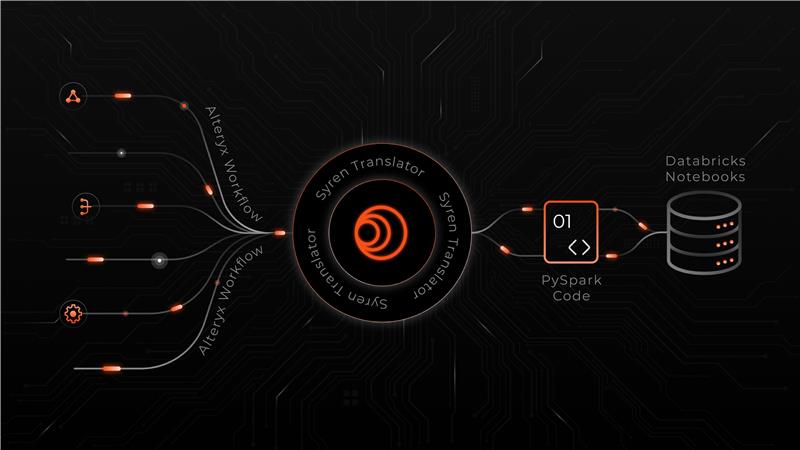

Automatically converts Airflow DAGs, operators, sensors, and task logic into Databricks Workflow equivalents using extensible translation rules.

Workflow Conversion Engine

Rule-driven mapping of scheduling, retries, dependencies, sensors, and environment configs into Databricks-native workflow constructs.
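
As a rough illustration of this kind of rule-driven mapping (not the Composer2Bricks implementation), the Python sketch below translates a simplified DAG description into a payload shaped like a Databricks Jobs API 2.1 `jobs/create` request. The input dictionary format, the `convert_dag` and `airflow_cron_to_quartz` helpers, and the notebook-path convention are hypothetical; the output field names (`task_key`, `depends_on`, `max_retries`, `min_retry_interval_millis`, `quartz_cron_expression`, `timezone_id`) follow the public Jobs API.

```python
from datetime import timedelta

# Airflow schedule presets this sketch understands (an assumed, minimal subset).
PRESETS = {
    "@hourly": "0 * * * *",
    "@daily": "0 0 * * *",
    "@weekly": "0 0 * * SUN",
}


def airflow_cron_to_quartz(cron: str) -> str:
    """Convert a 5-field Airflow cron string to a 6-field Quartz expression.

    Quartz prepends a seconds field and needs '?' in either day-of-month or
    day-of-week. Numeric day-of-week values are rejected for manual review,
    because standard cron counts Sunday as 0 while Quartz counts it as 1.
    """
    fields = PRESETS.get(cron, cron).split()
    if len(fields) != 5:
        raise ValueError(f"expected 5 cron fields: {cron!r}")
    minute, hour, dom, month, dow = fields
    if dow == "*":
        dow = "?"
    elif any(ch.isdigit() for ch in dow):
        raise ValueError(f"numeric day-of-week needs manual review: {dow!r}")
    elif dom == "*":
        dom = "?"
    else:
        raise ValueError("Quartz cannot restrict day-of-month and day-of-week together")
    return f"0 {minute} {hour} {dom} {month} {dow}"


def convert_dag(dag: dict) -> dict:
    """Map a simplified, hypothetical DAG description to a Jobs API 2.1-style payload."""
    defaults = dag.get("default_args", {})
    tasks = []
    for task in dag["tasks"]:
        # Task-level settings override default_args, as they do in Airflow.
        retries = task.get("retries", defaults.get("retries", 0))
        delay = task.get("retry_delay", defaults.get("retry_delay", timedelta(minutes=5)))
        entry = {
            "task_key": task["task_id"],
            "max_retries": retries,
            "min_retry_interval_millis": int(delay.total_seconds() * 1000),
            # Assumed convention: each Airflow task was ported to a notebook at this path.
            "notebook_task": {
                "notebook_path": f"/Workspace/migrated/{dag['dag_id']}/{task['task_id']}"
            },
        }
        upstream = task.get("upstream", [])
        if upstream:
            entry["depends_on"] = [{"task_key": up} for up in upstream]
        tasks.append(entry)
    job = {"name": dag["dag_id"], "tasks": tasks}
    if dag.get("schedule"):
        job["schedule"] = {
            "quartz_cron_expression": airflow_cron_to_quartz(dag["schedule"]),
            "timezone_id": dag.get("timezone", "UTC"),
        }
    return job


example = {
    "dag_id": "daily_sales",
    "schedule": "0 2 * * *",
    "default_args": {"retries": 2, "retry_delay": timedelta(minutes=10)},
    "tasks": [
        {"task_id": "extract"},
        {"task_id": "transform", "upstream": ["extract"]},
        {"task_id": "load", "upstream": ["transform"], "retries": 4},
    ],
}
job = convert_dag(example)
print(job["schedule"]["quartz_cron_expression"])  # 0 0 2 * * ?
```

Cron translation is isolated in its own function because schedule semantics are where silent drift is most likely; raising on ambiguous cases (numeric weekdays, both day fields set) pushes them into a remediation queue instead of guessing.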

Non-Portable Pattern Detection

Identifies Composer-specific operators, custom plugins, deprecated APIs, GCP-bound services, and unsupported dependencies requiring remediation.
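
One common way to approach this kind of detection is static analysis of DAG files. The Python sketch below, a minimal illustration rather than Composer2Bricks' actual rule engine, parses DAG source with the standard-library `ast` module and flags imports whose module path matches a rule list. `NON_PORTABLE_PREFIXES` and `find_non_portable_imports` are hypothetical names; the prefixes are real Airflow module paths, but a fuller rule set would likely be larger and would also inspect operator arguments, not only imports.

```python
import ast

# Hypothetical rule set: module prefixes that usually need remediation
# when moving off Cloud Composer.
NON_PORTABLE_PREFIXES = {
    "airflow.contrib": "deprecated Airflow 1.x-era import path",
    "airflow.providers.google": "GCP-bound provider operator or hook",
    "airflow.plugins_manager": "custom plugin registration",
}


def find_non_portable_imports(source: str, filename: str = "<dag>") -> list[tuple[int, str, str]]:
    """Return (line number, module, reason) for each flagged import, sorted by line."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            modules = [node.module]
        else:
            continue
        for module in modules:
            for prefix, reason in NON_PORTABLE_PREFIXES.items():
                # Match the prefix exactly or as a parent package.
                if module == prefix or module.startswith(prefix + "."):
                    findings.append((node.lineno, module, reason))
    return sorted(findings)


dag_source = '''
from airflow import DAG
from airflow.providers.google.cloud.operators.bigquery import BigQueryInsertJobOperator
from airflow.contrib.operators.ssh_operator import SSHOperator
from airflow.operators.python import PythonOperator
'''

for lineno, module, reason in find_non_portable_imports(dag_source):
    print(f"line {lineno}: {module} ({reason})")
```

Because the DAG file is parsed rather than imported, the scan runs without Airflow or GCP credentials installed, which suits a pre-migration assessment.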

AI-Powered Refactoring Intelligence

AI-assisted pattern detection recommends optimized Databricks workflow designs to improve reliability, resilience, and cost efficiency.

Incremental, Test-Driven Validation

Executes converted workflows with task-level and end-to-end validation, comparing Composer and Databricks runs using Delta Lake.
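
To make the comparison step concrete, here is a minimal sketch, assuming each run's task output has already been read into Python as a list of row tuples (for example, collected from the Delta table that run wrote). It fingerprints each row with SHA-256 and compares the two outputs as multisets, so row order does not matter but duplicates do. `table_fingerprint` and `compare_outputs` are hypothetical names, not part of any published Composer2Bricks API; at production scale the same idea would more plausibly run inside Spark than on Python lists.

```python
import hashlib
from collections import Counter


def table_fingerprint(rows):
    """Return an order-insensitive multiset of SHA-256 digests, one per row."""
    return Counter(
        hashlib.sha256(repr(tuple(row)).encode("utf-8")).hexdigest() for row in rows
    )


def compare_outputs(composer_rows, databricks_rows):
    """Compare one task's output from a Composer run and a Databricks run."""
    expected = table_fingerprint(composer_rows)
    actual = table_fingerprint(databricks_rows)
    missing = expected - actual      # rows Composer produced that Databricks did not
    unexpected = actual - expected   # rows only the Databricks run produced
    return {
        "composer_rows": sum(expected.values()),
        "databricks_rows": sum(actual.values()),
        "missing_in_databricks": sum(missing.values()),
        "unexpected_in_databricks": sum(unexpected.values()),
        "match": not missing and not unexpected,
    }


composer = [(1, "north", 120.0), (2, "south", 80.5)]
databricks = [(2, "south", 80.5), (1, "north", 120.0)]
print(compare_outputs(composer, databricks)["match"])  # True
```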

Governance, Monitoring & Alerts

Unity Catalog–based governance with lineage, auditing, and access control, plus near real-time alerts for conversion issues and failures.

Technical Capabilities

Airflow DAG Ingestion & Normalization

DAG & Operator Translation Engine

Intelligent Error Classification & Self-Healing Workflows

Governance & Metadata Alignment

Why Syren + Databricks?

Databricks-Native Orchestration by Design

Syren builds orchestration accelerators aligned to Databricks Workflows, replacing fragmented Airflow environments with a unified, serverless execution model.

Automated, Low-Risk Migration

Composer2Bricks automates DAG translation, dependency mapping, and validation, reducing manual rewrites and migration risk.

Operational Simplicity at Scale

Eliminate Airflow version drift, environment maintenance, and cluster tuning with Databricks-managed workflows.

Built-In Governance and Observability

Unity Catalog integration delivers centralized lineage, access control, and auditability across orchestration assets.

Faster Adoption of Data, AI, and Analytics

By modernizing orchestration first, teams unlock Databricks-native pipelines, AI workflows, and analytics without orchestration bottlenecks.

Value Delivered by Composer2Bricks

Automated DAG conversion, reducing manual rewrite effort.

Improved workflow reliability by surfacing deprecated operators and hidden failure modes.

Stronger governance and auditability with orchestration assets unified under Unity Catalog.

Faster onboarding through reusable, modular Databricks workflow components.

Immediate access to Databricks AI/BI workflows, boosting analyst and engineering productivity.

POC cycles reduced to 2–4 weeks, accelerating platform adoption and time-to-value.