DP2BRICKS

Modernize Dataproc at Scale: Automatically, Reliably, End-to-End

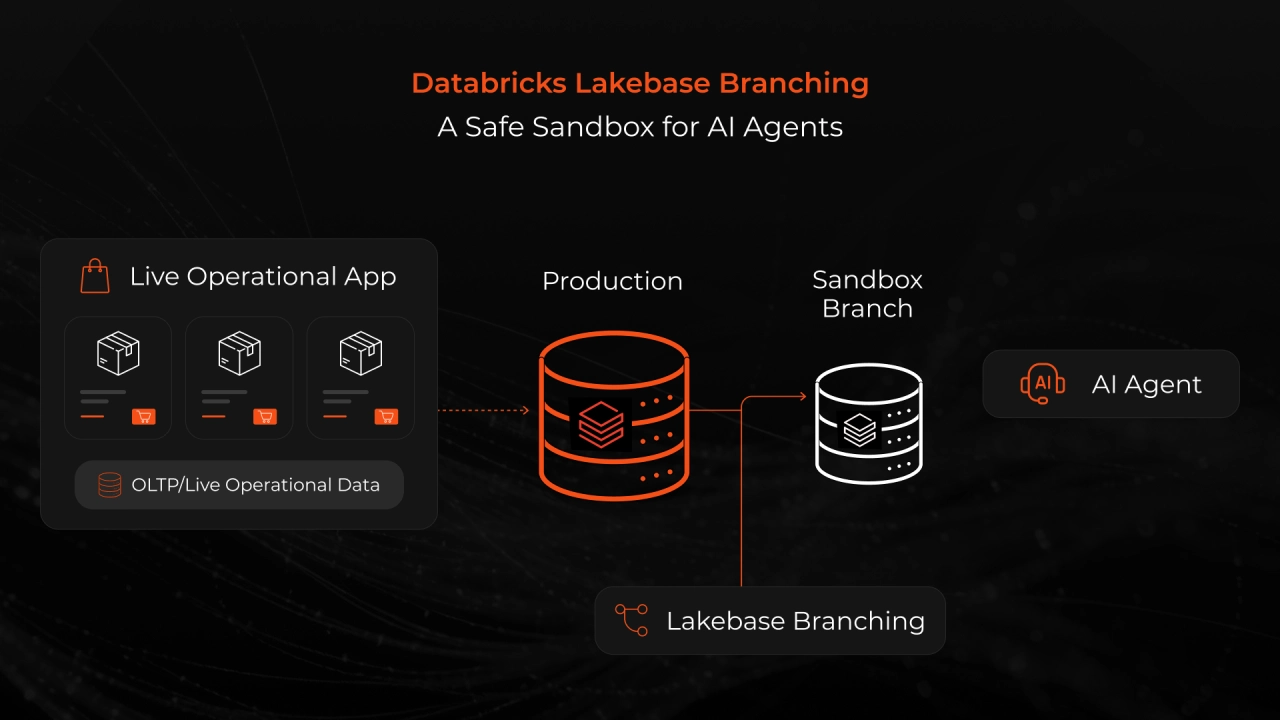

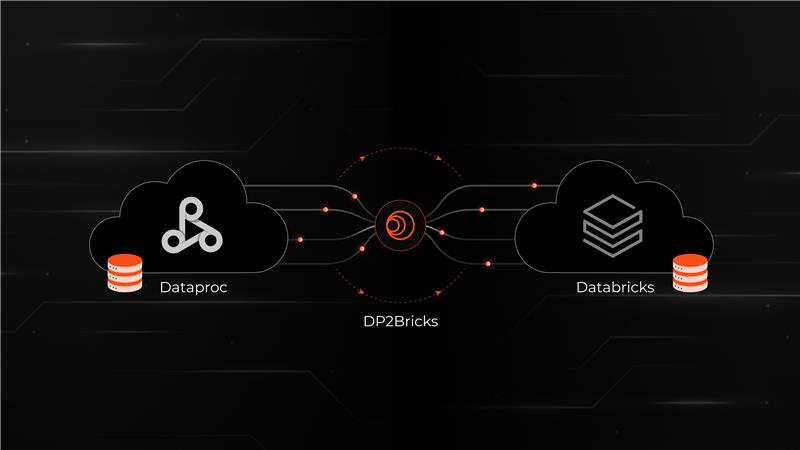

Dataproc to Databricks

A migration accelerator that streamlines the shift from Dataproc's cluster-heavy architecture to Databricks' unified compute model, giving teams a predictable, governed modernization workflow.

Why migrate from Dataproc to Databricks?

Dataproc handles small pipelines well, but growing workloads lead to fragmented clusters, duplicated logic, and inconsistent runtimes. Manual dependency management slows delivery, rising volumes increase costs, and governance stays scattered.

Databricks solves this with unified compute and Delta-native governance, but reaching it requires a structured, automated migration pathway.

DP2BRICKS provides exactly that: a governed, automated modernization engine for Dataproc workloads.

Customer Challenges Addressed by DP2BRICKS

Fragmented clusters lead to limited cross-team visibility.

High operational overhead and pipeline maintenance.

Manual logic causes metric drift, slow reporting, and unreliable analytics.

Lack of unified governance and lineage makes scaling difficult.

Inability to estimate migration effort, cost, or timelines blocks modernization planning.

Core Capabilities

Spark Conversion & Optimization Engine

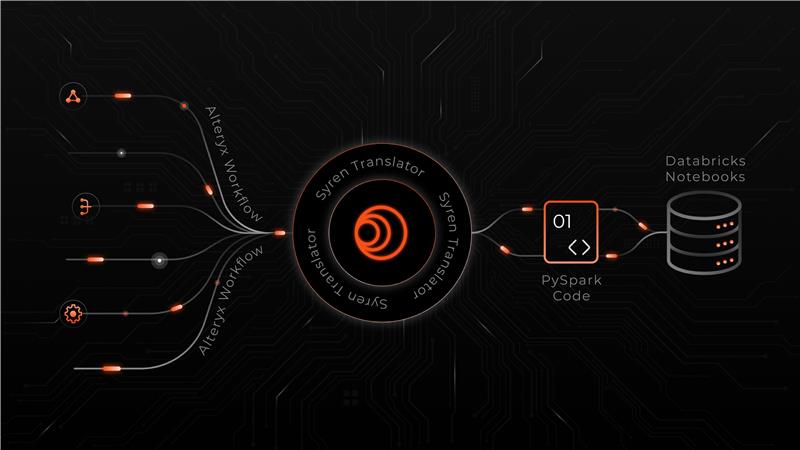

Transforms Dataproc Spark/Hive workloads into Databricks-ready formats, including Spark SQL rewrites, config normalization, Delta Lake adoption, and optimized cluster/job definitions.
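As an illustration of what config normalization can involve, the sketch below strips the `spark:` file prefix that Dataproc cluster properties carry and drops keys tied to YARN. The function name and the list of dropped keys are assumptions made for this example, not DP2BRICKS internals.

```python
# Hypothetical sketch: turn Dataproc-style cluster properties into a plain
# Spark conf dict. The drop list below is an illustrative assumption.

# Dataproc routes a property to a config file with a prefix such as "spark:".
SPARK_PREFIX = "spark:"

# Keys assumed to be cluster-manager specific and therefore not carried over.
DROPPED_KEY_PREFIXES = ("spark.yarn.", "spark.executor.instances")


def normalize_properties(dataproc_props: dict[str, str]) -> dict[str, str]:
    """Keep Spark properties only, strip the file prefix, drop YARN-bound keys."""
    spark_conf = {}
    for key, value in dataproc_props.items():
        if not key.startswith(SPARK_PREFIX):
            continue  # "yarn:", "hdfs:" and similar properties are out of scope
        name = key[len(SPARK_PREFIX):]
        if name.startswith(DROPPED_KEY_PREFIXES):
            continue
        spark_conf[name] = value.strip()
    return spark_conf


example = {
    "spark:spark.sql.shuffle.partitions": "400",
    "spark:spark.yarn.am.memory": "2g",
    "yarn:yarn.nodemanager.resource.memory-mb": "16384",
}
normalized = normalize_properties(example)
print(normalized)
```

In this example only `spark.sql.shuffle.partitions` survives; which keys a real migration keeps or rewrites depends on the target compute configuration.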

Workload Classification & Dependency Mapping

Maps dependencies across jobs, libraries, JARs, UDFs, Airflow/Dataproc Workflow templates, and cluster configs, highlighting incompatibilities and grouping workloads for staged migration.
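One way to picture grouping workloads for staged migration is a topological sort over the job dependency graph, where each "wave" contains jobs whose upstream dependencies have already been migrated. The sketch below uses Python's standard `graphlib`; the job names and the `plan_migration_waves` helper are hypothetical.

```python
from graphlib import TopologicalSorter


def plan_migration_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group jobs into waves; each wave depends only on earlier waves.

    `dependencies` maps a job name to the set of jobs it depends on.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable plan
        waves.append(ready)
        sorter.done(*ready)
    return waves


# Hypothetical job inventory for illustration.
deps = {
    "daily_report": {"clean_orders", "clean_customers"},
    "clean_orders": {"ingest_orders"},
    "clean_customers": {"ingest_customers"},
}
waves = plan_migration_waves(deps)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {wave}")
```

Here the two ingestion jobs form the first wave, the cleaning jobs the second, and the report the third. A circular dependency surfaces as an error at planning time rather than during migration.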

AI-Powered Intelligence

AI-driven insights detect Spark anti-patterns, recommend efficient Databricks alternatives (Photon, Delta Lake, Auto-Optimize), and automatically fix common migration blockers.

Performance Monitoring & Alerts

Near real-time visibility into migration readiness with automated detection of performance bottlenecks, deprecated APIs, non-portable configurations, and required optimizations.
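Deprecated-API detection can be approximated with a simple source scan. The sketch below flags two calls that Spark has marked deprecated since version 2.0; the pattern table and the `scan_source` helper are illustrative assumptions, and a production scanner would likely parse the code rather than match text.

```python
import re

# Illustrative patterns only: both APIs are deprecated since Spark 2.0.
DEPRECATED_PATTERNS = {
    r"\.registerTempTable\(": "use createOrReplaceTempView instead",
    r"\bHiveContext\(": "use SparkSession with Hive support instead",
}


def scan_source(source: str) -> list[tuple[int, str]]:
    """Return (line number, advice) for each deprecated call found."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for pattern, advice in DEPRECATED_PATTERNS.items():
            if re.search(pattern, line):
                findings.append((line_no, advice))
    return findings


# Hypothetical legacy PySpark snippet.
sample = """\
ctx = HiveContext(sc)
df = ctx.sql("SELECT 1")
df.registerTempTable("t")
"""
findings = scan_source(sample)
for line_no, advice in findings:
    print(f"line {line_no}: {advice}")
```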

Data Governance & Security

Unity Catalog ensures secure and compliant handling of notebooks, SQL logic, libraries, job configurations, and lineage during migration.

Data Ingestion & Standardization

Ingests Dataproc Spark jobs, PySpark notebooks, Hive SQL scripts, and workflow metadata into a normalized structure for automated analysis and migration planning.

Technical Capabilities

Dataproc Workload Ingestion & Normalization

Spark & Hadoop Code Translation Engine

Cluster-to-Job Refactoring Framework

Intelligent Error Classification & Self-Healing Execution

Why Syren + Databricks?

A Unified, Reliable Spark Runtime

Move from fragmented Dataproc clusters to a fully managed, autoscaling Databricks environment.

Accelerated Migration with Intelligent Refactoring

AI-assisted code translation and pattern detection streamline Spark, Hadoop, and PySpark modernization.

Lower Operational Overhead

Automated dependency mapping, job inventorying, and pipeline standardization.

Confidence Through Test-Driven Validation

Side-by-side output comparisons using Delta Lake ensure every migrated job behaves consistently before production rollout.
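The idea behind a side-by-side comparison can be sketched in plain Python as an order-insensitive, duplicate-aware diff of two result sets. The rows and the `compare_outputs` helper are hypothetical; at scale the same check would more likely run on DataFrames read from Delta tables.

```python
from collections import Counter


def compare_outputs(legacy_rows, migrated_rows):
    """Diff two row collections, ignoring order but respecting duplicates."""
    legacy = Counter(legacy_rows)
    migrated = Counter(migrated_rows)
    return {
        # Rows the legacy job produced that the migrated job did not.
        "missing_in_migrated": sorted((legacy - migrated).elements()),
        # Rows the migrated job produced that the legacy job did not.
        "unexpected_in_migrated": sorted((migrated - legacy).elements()),
    }


# Hypothetical outputs of the same job on both platforms (rows as tuples).
legacy = [("2024-01-01", 10), ("2024-01-02", 12), ("2024-01-02", 12)]
migrated = [("2024-01-02", 12), ("2024-01-01", 10), ("2024-01-03", 7)]
report = compare_outputs(legacy, migrated)
print(report)
```

An empty report on both keys indicates matching outputs. Counting duplicates matters because a dropped or doubled row would go unnoticed in a plain set comparison.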

Governed, Secure Scaling

Unity Catalog delivers unified governance (lineage, access control, auditing), enabling compliant, enterprise-wide scaling.

Faster Time-to-Value on Databricks

Eliminate migration complexity and operational blockers to help teams adopt Databricks capabilities faster.

Value Delivered by DP2BRICKS

Lower migration effort through automated Spark/Hadoop conversion to Databricks-ready code.

Faster runtimes by eliminating inefficient patterns and optimizing transformations.

Reduced operational overhead by consolidating fragmented Dataproc pipelines.

Faster adoption of Databricks AI/ML/BI with ready-to-run, cluster-free workflows.

Shorter modernization timelines using reusable ingestion, translation, and validation modules.

Improved cost predictability by replacing always-on Dataproc clusters with serverless autoscaling compute.